Microsoft 365 Copilot bug bypassed email security controls for users

2026-03-02 15:37:32

You trust your email security settings for a reason. So when an AI assistant quietly reads messages marked confidential and summarizes them, that trust takes a serious hit.

Microsoft says a bug in Microsoft 365 Copilot has allowed the AI-powered chat feature to process sensitive emails since late January.

The issue bypassed the data loss prevention policies that organizations rely on to protect private information. Emails that were supposed to stay locked down were summarized anyway.

Sign up for my free CyberGuy report

Get the best tech tips, breaking security alerts, and exclusive deals delivered straight to your inbox. Plus, you’ll get instant access to my Ultimate Scam Survival Guide, free when you join my CYBERGUY.COM newsletter.

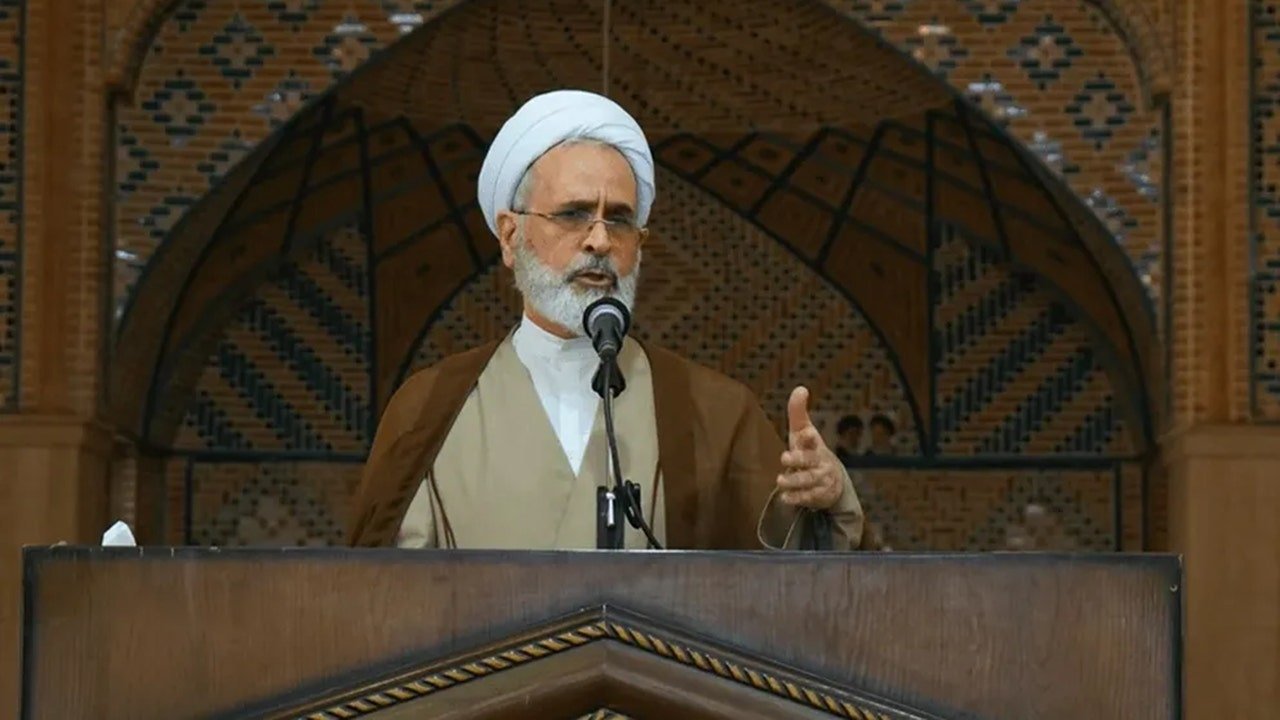

Microsoft 365 Copilot’s business chat interface is at the center of the issue after a bug allowed it to summarize confidential emails. (Microsoft)

Microsoft 365 Copilot bug summarized confidential emails

Microsoft says a coding error affected Microsoft 365 Copilot Chat, specifically its Work tab. The AI assistant helps business users summarize content, draft responses, and analyze information across Word, Excel, PowerPoint, Outlook, and OneNote.

Starting on January 21, a bug tracked as CW1226324 caused Copilot to read and summarize emails stored in the Sent Items and Drafts folders.

The real concern runs deeper. Many of these messages carried confidentiality or sensitivity labels.

Companies apply these labels along with data loss prevention (DLP) policies to prevent automated systems from accessing restricted content. Despite those safeguards, Copilot continued to generate summaries.

We reached out to Microsoft, and a spokesperson provided CyberGuy with the following statement:

“We identified and addressed an issue where Microsoft 365 Copilot Chat could return content from user-authored emails marked as confidential and stored within Draft and Sent Items in the Outlook desktop. This did not provide anyone with access to information they were not already authorized to see. Although access controls and data protection policies remained intact, this behavior did not meet the intended Copilot experience, which was designed to exclude protected content from Copilot access. A configuration update has been deployed worldwide to enterprise customers.”

Why the Microsoft 365 Copilot bug matters for data security

AI tools feel helpful. They save time and reduce busywork. But they also rely on deep access to your data. When guardrails fail, sensitive content can move, even temporarily, in ways you didn’t expect.

For businesses, this may mean:

Legal discussions summarized outside the intended controls

Financial projections processed despite restrictions

HR communications exposed to automated analysis

Even if no data left the organization, the bypass itself raises concerns about how AI integrates with enterprise security systems.

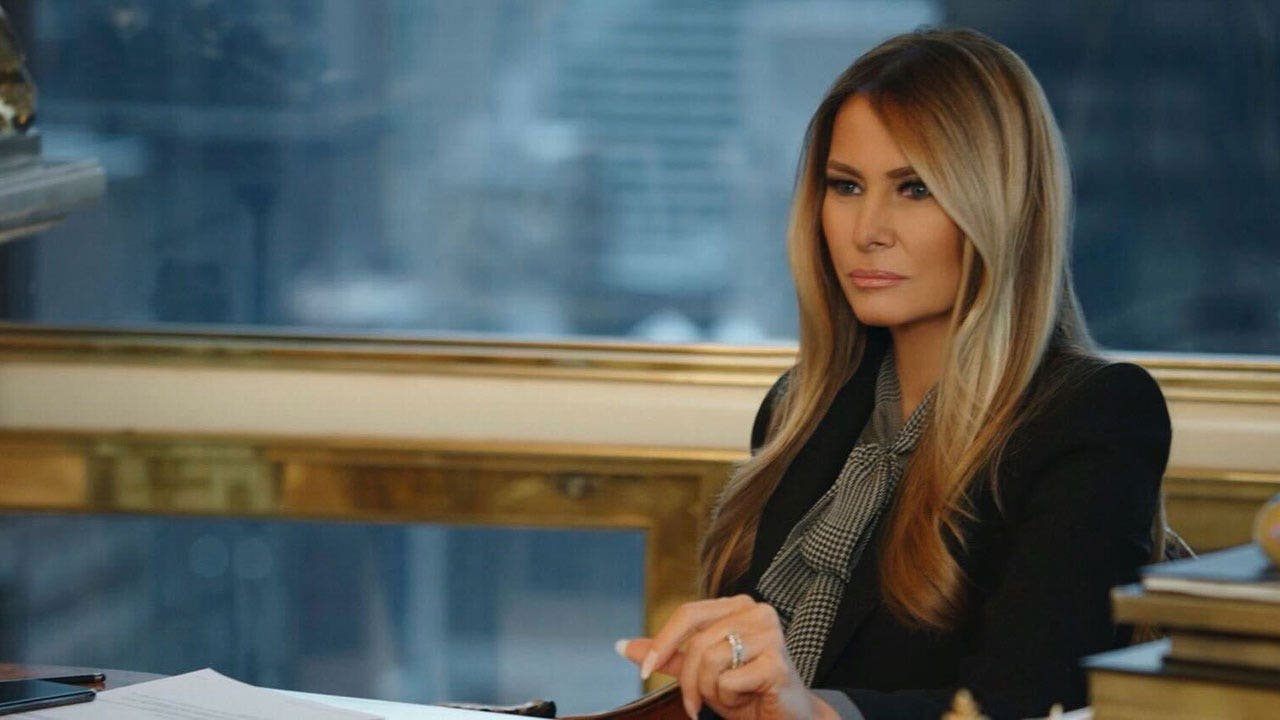

Business users rely on Copilot to simplify work, but a recent bug has raised concerns about how it handles sensitive email content. (Microsoft)

How Microsoft is fixing the Microsoft 365 Copilot bug

Microsoft says it began rolling out the fix in early February. The company continues to monitor the deployment and is reaching out to some affected users to verify that the fix works.

However, Microsoft has not provided a final timeline for full remediation. It also has not disclosed how many organizations were affected.

The issue is flagged as an advisory, which usually indicates limited scope or impact. However, many security professionals will need deeper clarity before feeling comfortable.

What the Microsoft 365 Copilot issue reveals about AI security

This incident highlights something that many companies are grappling with right now. AI assistants sit inside productivity platforms. They need access to email, documents, and collaboration tools to work well.

At the same time, these platforms contain your most sensitive information. When AI features expand rapidly, security policies must evolve just as quickly. Otherwise, even a simple error in the code can lead to unexpected exposure.

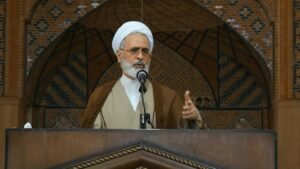

Copilot’s chat feature was designed to enhance productivity, but a bug in the code allowed it to process emails marked as confidential. (Microsoft)

Ways to stay safe after the Microsoft 365 Copilot bug

If your organization uses Microsoft 365 Copilot, here are practical steps to reduce risk:

1) Review Copilot access settings

Work with your IT team to confirm which folders and data sources Copilot can access.

2) Re-check DLP policies

Test sensitivity labels and DLP (Data Loss Prevention) rules to ensure they are preventing AI processing as intended.

3) Monitor advisory updates

Stay up to date with Microsoft service alerts and verify that the fix is fully deployed in your tenant.

4) Limit the scope of artificial intelligence during investigations

If you have concerns, consider temporarily restricting Copilot features until the verification process is complete.

5) Train employees on the limits of artificial intelligence

Remind employees that AI assistants can process drafts and sent messages. Encourage careful handling of sensitive content.

6) Audit Copilot activity logs

Review audit logs to see whether Copilot accessed or summarized labeled emails. This helps determine actual exposure rather than assumed risk.

7) Review the sensitivity label configuration

Make sure confidential labels are configured to block AI processing where needed. Misconfigured labels can create gaps even after the bug is fixed.

8) Reevaluate retention and draft policies

Since the issue involved Sent Items and Drafts, evaluate whether sensitive drafts should be stored long-term or deleted after sending.

9) Limit Copilot to specific user groups

Instead of enabling Copilot at the organization level, consider a phased deployment to departments with less exposure.

10) Conduct a post-incident security review

Use this moment to reevaluate how AI tools integrate with compliance controls. Treat it as a learning opportunity rather than a one-time glitch.
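For IT teams that want to put step 6 into practice, here is a minimal Python sketch of how an exported activity log might be screened for possible exposure. It is an illustration only, not Microsoft's tooling. The record layout (time, user, app, resources, folder, label) and the label names are hypothetical assumptions, and a real audit export will use different field names. The January 21 start date comes from the timeline above.

```python
import json
from datetime import datetime, timezone

# HYPOTHETICAL record layout, one JSON object per line. Field names are
# assumptions for illustration; adapt them to your actual audit export.
SAMPLE_EXPORT = """\
{"time": "2026-01-25T09:14:00Z", "user": "a@example.com", "app": "copilot", "resources": [{"folder": "Drafts", "label": "Confidential"}]}
{"time": "2026-01-10T11:00:00Z", "user": "b@example.com", "app": "copilot", "resources": [{"folder": "Sent Items", "label": "Confidential"}]}
{"time": "2026-02-01T08:30:00Z", "user": "c@example.com", "app": "copilot", "resources": [{"folder": "Inbox", "label": "Confidential"}]}
{"time": "2026-02-02T10:05:00Z", "user": "d@example.com", "app": "outlook", "resources": [{"folder": "Drafts", "label": "Confidential"}]}
{"time": "2026-02-03T16:45:00Z", "user": "e@example.com", "app": "copilot", "resources": [{"folder": "Sent Items", "label": null}, {"folder": "Sent Items", "label": "Highly Confidential"}]}
"""

# Start of the affected period, per the article's timeline.
WINDOW_START = datetime(2026, 1, 21, tzinfo=timezone.utc)
# Assumed label names; replace with the labels your organization uses.
PROTECTED_LABELS = {"Confidential", "Highly Confidential"}
# Folders named in the article as affected.
AFFECTED_FOLDERS = {"Drafts", "Sent Items"}


def parse_time(value):
    """Parse an ISO 8601 timestamp that ends in 'Z' into an aware datetime."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def flag_exposures(lines):
    """Return (user, time, folder, label) for each Copilot access to a
    protected item in an affected folder on or after WINDOW_START."""
    hits = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        record = json.loads(line)
        if record.get("app") != "copilot":
            continue
        if parse_time(record["time"]) < WINDOW_START:
            continue
        for resource in record.get("resources", []):
            if (resource.get("label") in PROTECTED_LABELS
                    and resource.get("folder") in AFFECTED_FOLDERS):
                hits.append((record["user"], record["time"],
                             resource["folder"], resource["label"]))
    return hits


if __name__ == "__main__":
    for hit in flag_exposures(SAMPLE_EXPORT.splitlines()):
        print(hit)
```

With the sample data, only the first and last records are flagged. The others fall outside the date window, involve a different folder, or did not come from Copilot. A list like this gives a security team concrete users and timestamps to follow up on.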

Pro Tip: This Copilot bug centers on enterprise controls. However, AI tools run on your devices and accounts, so keeping software up to date and using strong antivirus software adds an important layer of defense. Get my picks for the best 2026 antivirus protection winners for Windows, Mac, Android, and iOS at Cyberguy.com

Consider a more private email provider

Enterprise AI mistakes raise a bigger question: How much access should email platforms have to your data in the first place? If you want an extra layer of privacy beyond the major providers, privacy-focused email services are worth exploring.

Some offer end-to-end encryption, support for PGP encryption, and a strict ad-free business model that avoids scanning messages for marketing purposes.

Many of them also allow you to create disposable email aliases, which can reduce spam and limit exposure if a single address is compromised.

Although no provider is immune to bugs, choosing an email service built on privacy rather than data monetization can limit the amount of information automated systems have access to in the first place.

For individuals, journalists, and small businesses in particular, this extra control can make a meaningful difference.

For recommendations on private and secure email providers that offer aliases, visit Cyberguy.com

Kurt’s key takeaways

AI assistants are becoming part of everyday work life. They promise speed, efficiency, and smarter workflows. But convenience should never trump security.

This Copilot bug may have had limited impact. Still, it serves as a reminder that AI tools are only as strong as the guardrails behind them.

When those guardrails slip, even briefly, sensitive information can move in unexpected ways. As AI becomes increasingly integrated into business software, trust will depend on transparency, quick fixes, and clear communication.

Here’s the real question: If your AI assistant can see everything you type, are you completely confident that it respects every boundary you set? Let us know by writing to us at Cyberguy.com

Copyright 2026 CyberGuy.com. All rights reserved.
